Tracing National Climate Policy Implementation from Policy Documents using NLP

March 25, 2022

Emily Robitschek, Manuel Kuhm, Erik Lehmann, Maren Bernlöhr, Christian Merz

Experience from the ETH Zürich Hack4Good hackathon with SDSN and GIZ

By Emily Robitschek

The 26th UN Climate Change Conference of the Parties (COP26) in 2021 was filled with promises of governmental action to help tackle climate change.

But who tracks these claims, and how? In global politics, nations constantly publish commitments to international agreements. Tracking how these commitments are implemented allows countries to assess their progress (or lack thereof) towards a specific goal and to be held accountable for it. Policy coherence “entails fostering synergies across economic, social and environmental policy areas; identifying trade-offs and reconciling domestic and international objectives; and addressing the spill-overs of domestic policies on other countries and on future generations.”[1] Being able to measure coherence can help identify unique and efficient opportunities suited to each country and context.

However, tracing how these countries actually implement their policies is time-consuming and is not done systematically, which hampers accountability and sustainable progress.

Tracking implementation and coherence is difficult in part because we lack direct measures of either. Both are inherently fuzzy, constructed concepts that must be assessed through a combination of indirect evidence. Currently, policy experts carefully comb through documents looking for words relevant to the policy goal of interest and noting the context in which those words are mentioned. For instance, an inspection might reveal that a country’s climate change goals are most often mentioned in the context of preserving biodiversity and creating opportunities for economic progress. This laborious, time-consuming process makes it challenging to keep pace with the complex realm of international policy in an actionable way.

Data science for policy tracking

During the Hack4Good program at ETH Zurich, we tested the feasibility of data science approaches to make this policy tracking process more manageable for policy analysts. Hack4Good is a program that matches data science talent from ETH Zurich with non-profit organizations for eight weeks to create data-driven solutions to increase these organizations’ impact. Diverse academic backgrounds were represented within our Hack4Good team: before starting my master’s degree in Science, Technology and Policy, I studied biochemistry and conducted cancer research. John studied computer engineering, Paul was in a chemistry Ph.D. program, and Raphael had just finished his Ph.D. in cosmology. We brought together our different experiences and strengths and were curious about applying our data science skills to help solve a social problem.

During our project, we collaborated with the GIZ Data Lab and the Sustainable Development Solutions Network (SDSN) to develop a system to track the implementation and coherence of policies related to the Nationally Determined Contributions (NDCs) that countries pledged under the Paris Climate Agreement to curb climate change and its impacts.

Engineering a solution

We met regularly to discuss and work together on different aspects of the problem and to conceptualize which tool would be most helpful to the analysts. At first, it was difficult to prioritize which direction to take, but feedback from the GIZ Data Lab team helped us set a course. Our team could have adopted two main approaches: unsupervised or supervised methods. An unsupervised approach, where the computer learns a representation of a particular topic, could be powerful and require less manual tweaking by the analyst; Raphael, Fran, Johnathan and I all explored this in different ways initially. For our core method, however, we opted for a semi-supervised framework in the form of semantic search and visualizations, which gives more flexibility and clarity about what the method searches for and what it might miss.

Over the eight weeks, our team used Python and existing natural language processing packages like spaCy and TensorFlow to develop a method offering several ways of searching documents for keywords and their contexts, from simple keyword search to an approach leveraging a pre-trained neural network. The tool then visualizes where in the document these contexts are concentrated. Co-occurring keyword topics can be added for comparison and to understand the coherence of the policies. Mentions of financial commitments or of certain partner organizations were taken as a further indicator of an actual intention to implement. The method can be applied to “score” a corpus of documents for words related to NDCs or other policy topics and rank them for the policy analyst.
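To make the “simple search” end of this spectrum concrete, here is a minimal Python sketch of keyword-in-context search: it finds whole-token matches of (possibly multi-word) keywords and returns a window of surrounding tokens for each hit. It is not the team's code; the tokenizer, the `window` size and the function names are assumptions for illustration, and the pre-trained neural network variant is not shown.

```python
import re

# Lowercase alphanumeric tokens; punctuation is dropped (a simplifying assumption).
TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text):
    """Split text into lowercase word tokens."""
    return TOKEN.findall(text.lower())


def find_contexts(text, keywords, window=5):
    """Return (keyword, context) pairs for every whole-token keyword match.

    Keywords may be multi-word phrases; `window` is the number of tokens
    kept on each side of a match (an illustrative default, not a project value).
    """
    tokens = tokenize(text)
    phrases = [p for p in (tuple(tokenize(k)) for k in keywords) if p]
    hits = []
    for i in range(len(tokens)):
        for phrase in phrases:
            n = len(phrase)
            if tuple(tokens[i:i + n]) == phrase:
                start, end = max(0, i - window), i + n + window
                hits.append((" ".join(phrase), " ".join(tokens[start:end])))
    return hits


if __name__ == "__main__":
    doc = ("The government will expand solar power and protect forests. "
           "Renewable energy targets support the NDC.")
    for keyword, context in find_contexts(doc, ["solar power", "forests", "NDC"], window=2):
        print(f"{keyword}: ...{context}...")
```

Matching on tokens rather than raw substrings means a keyword such as “fund” is not counted inside “fundamental”, which keeps the hits easy for an analyst to verify.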

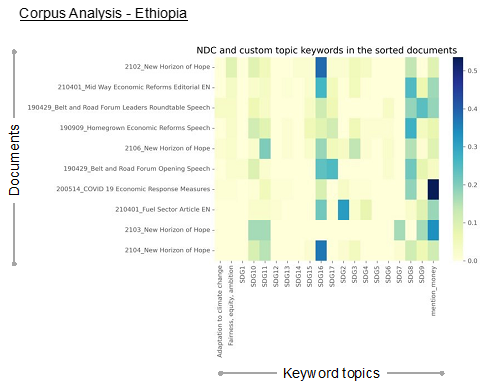

The heatmap shows the frequency scores for different keyword categories (x-axis) for a sample of documents from Ethiopia (y-axis). Keywords are supplied in a simple file and automatically matched and searched for within the documents. Categories can be related to the Sustainable Development Goals (SDGs), defined by the policy analyst based on their domain knowledge and the problem context (highlighted in red), or based on stated policy goals such as NDCs (highlighted in blue).
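The post does not spell out how the frequency scores are computed. As one plausible sketch, assuming a hypothetical two-column keyword file (`category,keyword` per line) and a score defined as keyword matches per 1,000 tokens, the document-by-category matrix behind such a heatmap, and a ranking of documents by one category, could be computed like this:

```python
import csv
import io
import re
from collections import defaultdict

TOKEN = re.compile(r"[a-z0-9]+")


def load_keywords(fileobj):
    """Read hypothetical 'category,keyword' CSV rows into {category: [phrase tuples]}."""
    categories = defaultdict(list)
    for row in csv.reader(fileobj):
        if len(row) < 2:
            continue  # skip blank or malformed lines
        phrase = tuple(TOKEN.findall(row[1].lower()))
        if phrase:
            categories[row[0].strip()].append(phrase)
    return dict(categories)


def count_phrase(tokens, phrase):
    """Count (possibly overlapping) occurrences of a token sequence."""
    n = len(phrase)
    return sum(tuple(tokens[i:i + n]) == phrase for i in range(len(tokens) - n + 1))


def score_matrix(docs, categories, per=1000):
    """Return {doc_name: {category: matches per `per` tokens}} (assumed score definition)."""
    matrix = {}
    for name, text in docs.items():
        tokens = TOKEN.findall(text.lower())
        total = max(len(tokens), 1)  # avoid division by zero for empty documents
        matrix[name] = {
            cat: per * sum(count_phrase(tokens, p) for p in phrases) / total
            for cat, phrases in categories.items()
        }
    return matrix


def rank(matrix, category):
    """Document names sorted by their score for one category, highest first."""
    return sorted(matrix, key=lambda name: matrix[name][category], reverse=True)


if __name__ == "__main__":
    keyword_file = io.StringIO(
        "energy,solar\nenergy,renewable energy\nfinance,funding\nfinance,investment\n"
    )
    categories = load_keywords(keyword_file)
    docs = {
        "doc_a": "Solar and renewable energy funding will grow.",
        "doc_b": "Investment in roads and funding for schools.",
    }
    matrix = score_matrix(docs, categories, per=100)
    for name, row in matrix.items():
        print(name, "  ".join(f"{cat}={score:.1f}" for cat, score in row.items()))
    print("ranked by finance:", rank(matrix, "finance"))
```

Normalizing by document length keeps long strategy documents from topping the ranking simply because they contain more words; each row of `matrix` corresponds to one row of a heatmap like the one above.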

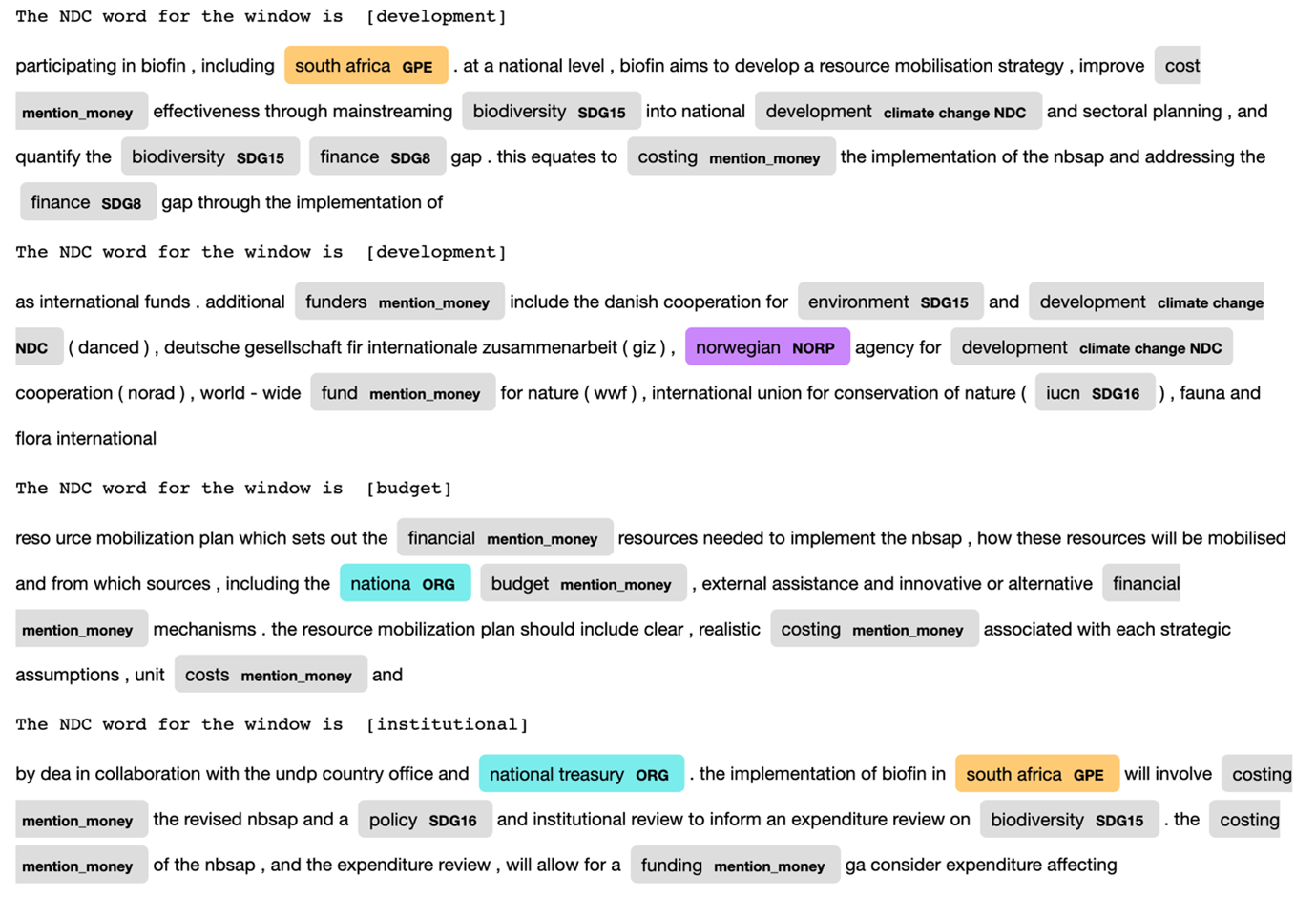

Within documents, our method can single out key sections and visualize them, so the policy analyst can see which sections match the context related to the policy goals and topics of interest, and why the method flagged each section as important.

The picture shows an example of document text around NDC keywords that also scored highly for mentions of money-related words. Even in these small examples, one can derive important information about donors and specific numerical investments, funding collaborations and international partnerships, and state and non-state partnerships and priorities.
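A rough Python sketch of the co-occurrence idea behind this example: take a window of tokens around each NDC-related term, count how many money-related words fall inside it, and surface the highest-scoring windows first. The term lists, the window size and the plain co-occurrence count are illustrative assumptions, not the project's actual configuration.

```python
import re

TOKEN = re.compile(r"[a-z0-9]+")

# Illustrative word lists (hypothetical); an analyst would supply their own.
NDC_TERMS = {"ndc", "emissions", "adaptation", "mitigation"}
MONEY_TERMS = {"usd", "eur", "million", "billion", "fund", "funding",
               "grant", "loan", "investment", "budget"}


def money_scored_windows(text, anchors=NDC_TERMS, money=MONEY_TERMS, window=8, top=3):
    """Rank context windows around anchor terms by how many money terms they contain."""
    tokens = TOKEN.findall(text.lower())
    scored = []
    for i, tok in enumerate(tokens):
        if tok in anchors:
            ctx = tokens[max(0, i - window):i + window + 1]
            score = sum(t in money for t in ctx)  # simple co-occurrence count
            scored.append((score, i, " ".join(ctx)))
    # Highest money score first; ties keep document order.
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [(score, ctx) for score, _, ctx in scored[:top]]


if __name__ == "__main__":
    doc = ("Our NDC prioritises adaptation in agriculture and water. "
           "Emissions from transport fall under mitigation, backed by a 50 million USD loan.")
    for score, ctx in money_scored_windows(doc):
        print(score, ctx)
```

Breaking ties by position keeps equally scored windows in document order, which makes them easier to check against the source text.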

All in all, the method we developed is not yet ready to automate the whole process, but it has potential as an interpretable, time-saving, and customizable tool for policy analysts. Even without much technical knowledge, analysts can understand exactly what it is doing, customize the words and contexts they search for based on their problem of interest, and prioritize which documents and document sections to examine when evaluating policy implementation and coherence. My hope is that policy analysts can use our tool to spend less time searching for key phrases in documents and more time thinking deeply about how to address the problems and opportunities it reveals.

Along the way

Even over the brief course of the Hack4Good program, our team grew a lot. It was exciting to discuss and iterate during the Hack4Good hacknights and hackdays, and I personally gained some useful exposure to certain software engineering practices and the latest and most popular tools in the field of Natural Language Processing (NLP) from my teammates, our industry mentor Fran, and our Hack4Good mentor Gianluca Mancini.

Some of the most entertaining and informative moments were the workshops coordinated between Hack4Good and other partners. In one workshop, we learned about impact analysis from a socio-economic expert before using that approach to frame our projects in breakout groups. In another, we discussed different ways of organizing data science workflows and built Lego towns to learn about the Agile project management approaches commonly used in software development. Paul’s insights from the Pitch Workshop were invaluable for our final presentation.

It was a privilege to learn from my fellow classmates, and to work with the hard-working and creative people from the GIZ Data Lab and SDSN. I was continually impressed by their thoughtfulness, approachability and the quality of their communication and organization. I had such a fun time working with them as they helped frame the problem while giving us the room to innovate our own solutions.

In the end, I was surprised and delighted to find unexpected intersections between the type of thinking I had cultivated as a cancer researcher and the type required for this data science project in a very different domain. I’m looking forward to building on the lessons I learned from this project and to continuing to grow my understanding of the larger systems that affect our lives on this planet and of the ways in which data-driven approaches can be part of the solution.